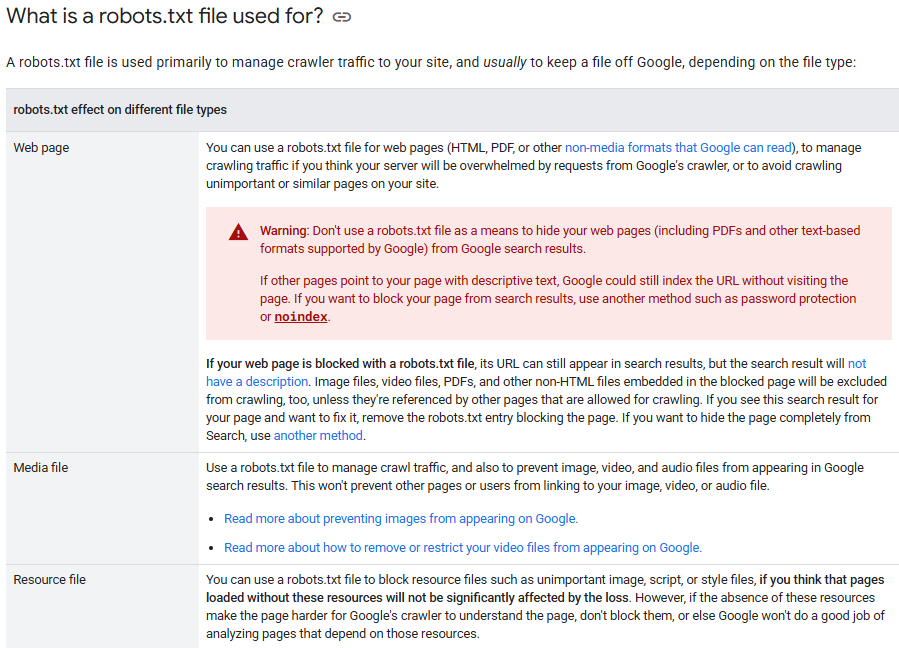

Robots.txt SEO is about making crawling efficient so that search engines spend their time on the pages that matter for rankings and user experience. The robots.txt file implements the Robots Exclusion Protocol (REP), a standard for telling crawlers which URLs they should not request.

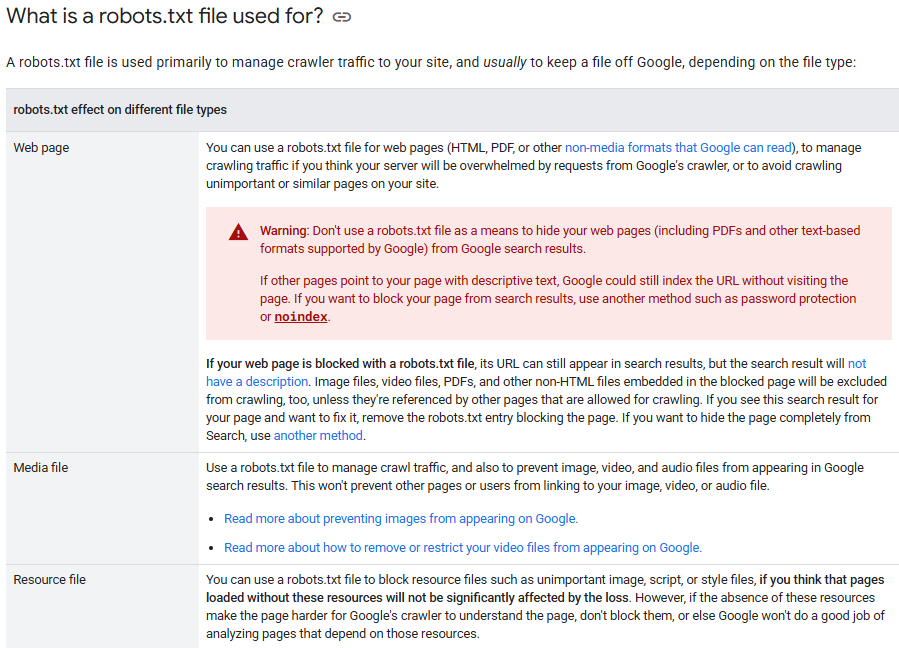

Google describes it as a way to avoid overloading your site with crawler requests, not as a mechanism for keeping web pages out of Google.

Do you know what robots.txt is and how it affects your website's SEO? This blog explains what it is and how to create and optimize your robots.txt file for better SEO.

What is Robots.txt and How Does It Impact SEO?

If you are wondering what robots.txt is in SEO, think of it as your website's doorkeeper. The robots.txt file tells search engine crawlers which pages or folders they may visit and which they may not. Placed in your website's root directory (like yourdomain.com/robots.txt), it limits crawler traffic so that bots do not overload your server.

Robots.txt SEO helps determine which URLs are eligible for crawling. It conserves crawl budget by keeping crawlers away from low-value or duplicate content. It does not stop indexing (that is what noindex is for), so a blocked URL can still appear in search results if other pages link to it, but it still gives bots clear crawling instructions.

To understand how crawl control fits into a broader optimization strategy, explore our Technical SEO Complete Guide 2026.

Here are the common robots.txt directives to remember:

| Directive | Function |
| --- | --- |
| User-agent | Targets all crawlers (*) or a specified crawler |
| Disallow | Blocks access to a specific URL or folder |
| Allow | Permits crawling of a path that is otherwise disallowed |
| Sitemap | Directs crawlers toward your sitemap |
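
As a rough illustration of how these four directives work together, the sketch below feeds a made-up robots.txt to Python's standard `urllib.robotparser` module and asks which URLs a crawler may fetch. The paths, the example.com domain, and the `load_rules` helper are assumptions for demonstration only. This particular parser applies the first matching rule, so the Allow line is placed before the broader Disallow line.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules for illustration; the paths and domain are assumptions.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Allow: /private/press-kit/
Disallow: /private/
Sitemap: https://www.example.com/sitemap.xml
"""


def load_rules(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text without making any network request."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = load_rules(SAMPLE_ROBOTS_TXT)

# Disallow: everything under /private/ is off limits...
print(parser.can_fetch("*", "https://www.example.com/private/report.html"))  # False
# ...except the path that the earlier Allow line opens up again.
print(parser.can_fetch("Googlebot", "https://www.example.com/private/press-kit/logo.png"))  # True
# Paths that match no rule stay crawlable.
print(parser.can_fetch("*", "https://www.example.com/blog/post"))  # True
# Sitemap lines are collected separately (site_maps() needs Python 3.8+).
print(parser.site_maps())  # ['https://www.example.com/sitemap.xml']
```

Search engines may resolve conflicts between Allow and Disallow differently from this parser, so treat the result as a local sanity check rather than a guarantee of crawler behavior.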

Is Robots.txt Still Necessary for SEO in 2026?

Is robots.txt necessary for SEO in 2026? More than ever. It helps search engines concentrate on your best content and skip the pages that don't need attention.

For example, an eCommerce site can block /cart/ or /checkout/ pages to save crawl budget and boost indexing of product pages.

Think of it as a GPS that shows a search spider what's most important. Without it, the spider wastes time crawling login pages, filters, or duplicate pages, which degrades SEO performance.

An intelligent robots.txt file keeps your site cleaner, faster, and more visible on search engines.

How to Create a Robots.txt File for SEO

How do you use robots.txt for SEO? You use it to control how search engines crawl your website so that your SEO effort goes to the pages that matter. Here is a simple step-by-step guide:

Step 1: Create the file - Using a simple text editor like Notepad, create a file named robots.txt. Save it as plain text, without any rich formatting.

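Step 2: Add basic rules - Include directives such as User-agent, Disallow, Allow, and Sitemap. For example, a setup that disallows cart and checkout paths keeps crawlers on an eCommerce site away from low-value or duplicate pages.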

Step 3: Upload the file to your site's root so that it is served at https://www.example.com/robots.txt.

Step 4: Test your file. Google has retired its standalone robots.txt Tester, so use the robots.txt report in Google Search Console to see whether Google can fetch and parse your rules.
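
The steps above can be sketched in Python as a local pre-check before you upload anything. This is a minimal sketch under stated assumptions: the eCommerce rules, the example.com URLs, and the `write_robots_txt` and `validate` helpers are all hypothetical, and the file is written to a temporary folder instead of a real web root.

```python
import tempfile
from pathlib import Path
from urllib.robotparser import RobotFileParser

# Hypothetical eCommerce rules; adjust the paths to your own site.
ECOMMERCE_RULES = """\
User-agent: *
Disallow: /cart/
Disallow: /checkout/
Sitemap: https://www.example.com/sitemap.xml
"""


def write_robots_txt(directory: Path, content: str) -> Path:
    """Steps 1 and 2: save the rules as a plain UTF-8 text file named robots.txt."""
    path = directory / "robots.txt"
    path.write_text(content, encoding="utf-8")
    return path


def validate(path: Path, blocked: list[str], allowed: list[str]) -> bool:
    """Local pre-check for step 4: confirm the rules behave as intended."""
    parser = RobotFileParser()
    parser.parse(path.read_text(encoding="utf-8").splitlines())
    blocked_ok = all(not parser.can_fetch("*", url) for url in blocked)
    allowed_ok = all(parser.can_fetch("*", url) for url in allowed)
    return blocked_ok and allowed_ok


# A temporary folder stands in for the web root (step 3 is the real upload).
with tempfile.TemporaryDirectory() as tmp:
    robots_path = write_robots_txt(Path(tmp), ECOMMERCE_RULES)
    rules_ok = validate(
        robots_path,
        blocked=[
            "https://www.example.com/cart/",
            "https://www.example.com/checkout/payment",
        ],
        allowed=["https://www.example.com/products/shoes"],
    )

print(rules_ok)  # True
```

A check like this catches typos in paths early, but it does not replace testing the live file in Search Console.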

The significance of the robots.txt file in SEO lies in directing search bots toward your key content. It saves crawl budget and increases visibility, especially on sites with many dynamic or duplicate pages.

How Do I Submit Robots.txt to Google Search Console?

To submit your robots.txt file, upload it to your site’s root directory (e.g., https://www.example.com/robots.txt). Then open Google Search Console, check the robots.txt report for errors, and request a recrawl if you’ve made updates. This helps Google read and follow your file correctly.

How to Optimize Robots.txt for Better Search Visibility

A well-structured robots.txt file in SEO guides search engines toward high-value content while blocking access to less essential or sensitive areas. Here is how to optimize it effectively:

1. Place It Correctly

Save your file with the exact name robots.txt, and place it in your website's root directory.
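
To make the placement rule concrete, here is a small sketch that works out where crawlers will look for the file, given any page URL. The `robots_txt_url` helper and the example.com URLs are assumptions for illustration.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(page_url: str) -> str:
    """Return the root-level robots.txt URL for the host that serves page_url."""
    parts = urlsplit(page_url)
    if not parts.scheme or not parts.netloc:
        raise ValueError(f"expected an absolute URL, got {page_url!r}")
    # Keep the scheme and host (including any port); drop path, query, and fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


print(robots_txt_url("https://www.example.com/blog/post?id=7"))
# https://www.example.com/robots.txt
print(robots_txt_url("https://shop.example.com/cart/"))
# https://shop.example.com/robots.txt
```

The second call shows why a subdomain needs its own file: the robots.txt location is derived from the host, so rules on www.example.com do not cover shop.example.com.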

2. Use Wildcards & Symbols

Block URL patterns with the asterisk wildcard, as in /search/*. Restrict specific file extensions such as PDF with the dollar sign, which marks the end of the URL, e.g., /*.pdf$.
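
To see how such patterns behave, the sketch below translates a robots.txt path pattern into a regular expression. It is a hand-rolled approximation, not how any search engine implements matching; Python's standard `urllib.robotparser` matches plain path prefixes, so the `pattern_to_regex` and `matches` helpers are assumptions written for this example.

```python
import re


def pattern_to_regex(pattern: str) -> re.Pattern:
    """Translate a robots.txt path pattern into a compiled regex.

    '*' matches any run of characters; a trailing '$' anchors the end of the URL.
    """
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape each literal piece, then join the pieces with '.*' for every '*'.
    body = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    return re.compile(body + (r"\Z" if anchored else ""))


def matches(pattern: str, path: str) -> bool:
    """True if the rule pattern applies to the URL path (matched from its start)."""
    return pattern_to_regex(pattern).match(path) is not None


print(matches("/search/*", "/search/shoes?page=2"))          # True
print(matches("/search/*", "/blog/search-tips"))             # False
print(matches("/*.pdf$", "/files/guide.pdf"))                # True
print(matches("/*.pdf$", "/files/guide.pdf?download=1"))     # False
```

The last line shows the effect of the dollar sign: once a query string follows .pdf, the URL no longer ends in .pdf, so the anchored rule stops applying.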

3. Do Not Block Key Resources

Never block CSS or JavaScript files. Google requires them to understand the layout and content of a page.

4. Test & Update

Use the robots.txt report in Google Search Console to find errors and fix them quickly.

If you use WordPress, the robots.txt editor in the All in One SEO plugin lets you edit and test rules without coding.

Robots.txt vs. Sitemap.xml: What's the Difference?

Both robots.txt and sitemap.xml help with search engine visibility. However, these files perform different functions.

A robots.txt file tells search engines where they should not crawl, such as private folders or duplicate pages. It sits in the site's root directory and contains simple rules like Disallow to steer crawler behavior.

In contrast, sitemap.xml is a guide to what crawlers should crawl. It lists your most important URLs with metadata such as the last-modified date and priority.

Together, the two files shape how search engines crawl your site: robots.txt limits access, while sitemap.xml invites it.

| Feature | Robots.txt | Sitemap.xml |
| --- | --- | --- |
| Purpose | Blocks particular content access | Highlights essential URLs |
| Format | Plain text | XML |
| Location | Root directory (/robots.txt) | Either root or Search Console submission |
| SEO Function | Controls crawler behavior | Enhances visibility |
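
Because the two files pull in opposite directions, it can be worth checking that they do not contradict each other. The sketch below, using made-up file contents, lists sitemap URLs that robots.txt blocks. The sample rules, the example.com URLs, and the `sitemap_urls` and `blocked_sitemap_urls` helpers are assumptions for illustration.

```python
import xml.etree.ElementTree as ET
from urllib.robotparser import RobotFileParser

# Namespace used by the sitemap protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical file contents for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
"""

SITEMAP_XML = """\
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/products/shoes</loc></url>
  <url><loc>https://www.example.com/checkout/start</loc></url>
</urlset>
"""


def sitemap_urls(xml_text: str) -> list[str]:
    """Extract every <loc> value from a sitemap document."""
    root = ET.fromstring(xml_text)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]


def blocked_sitemap_urls(robots_txt: str, sitemap_xml: str, user_agent: str = "*") -> list[str]:
    """Return sitemap URLs that the robots.txt rules forbid user_agent to crawl."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in sitemap_urls(sitemap_xml) if not parser.can_fetch(user_agent, url)]


conflicts = blocked_sitemap_urls(ROBOTS_TXT, SITEMAP_XML)
print(conflicts)  # ['https://www.example.com/checkout/start']
```

Any URL in the result is being advertised in the sitemap and blocked in robots.txt at the same time, so one of the two files probably needs an update.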

What is a Robots.txt Generator and Should You Use One?

A robots.txt generator is an easy tool for creating robots.txt files without writing the rules by hand. Its interface guides you through choosing which parts of your website search engines should crawl and which should be blocked, and it produces the correct syntax for you. It is especially useful for beginners or anyone who wants to save time and avoid errors.

If you use Yoast SEO on WordPress, its built-in robots.txt editor makes it easy to customize rules from the SEO dashboard.

Should you? Yes. Robots.txt generators save you from typing a wrong rule and blocking essential content. These tools help you build the best robots.txt for SEO when you are unsure whether a certain syntax is correct or how crawlers will respond.

Try W3Era's Robots.txt Generator, an intelligent guided tool for all websites. You can create an SEO-friendly robots.txt file without technical proficiency.

Conclusion

Knowing how to use robots.txt accurately can make a real difference in how search engines crawl and rank your site. Whether you run a blog, an eCommerce store, or a service site, a well-optimized robots.txt file gets the most out of your valuable content while keeping crawlers away from unnecessary pages. From initial creation and submission to optimization and testing, take every step to improve your site's visibility and performance.

It is now simple with tools like W3Era's Robots.txt Generator. Take control of your crawl strategy and let search engines focus on what truly matters.